Latest News

Creating a Llama or GPT Model for Next-Token Prediction

This article is divided into three parts:

- Understanding the Architecture of Llama or GPT Model
- Creating a Llama or GPT Model for Pretraining
- Variations in the Architecture

The...

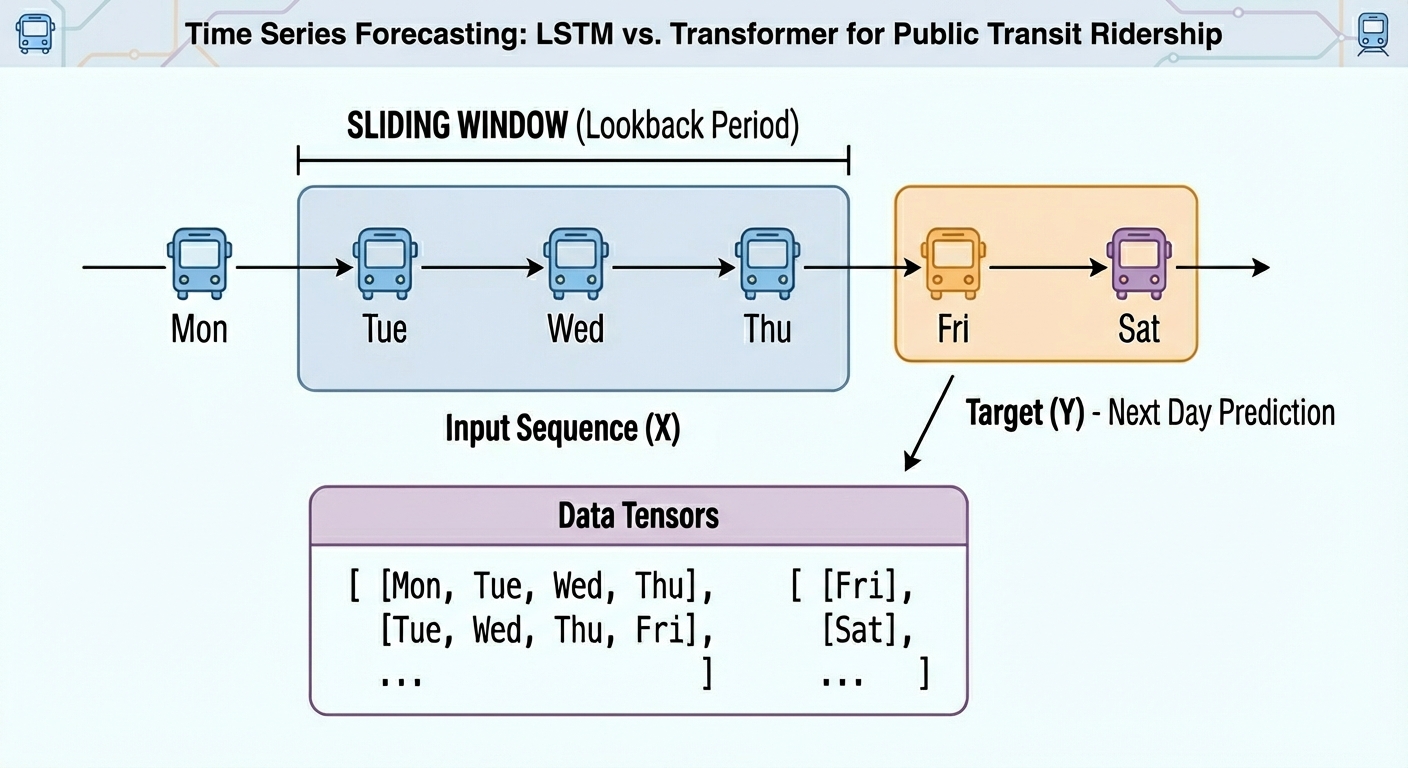

Transformer vs LSTM for Time Series: Which Works Better?

From daily weather measurements or traffic sensor readings to stock prices, time series data are present nearly everywhere.

The Newest Google Nest Doorbell Is Over 25% Off Right Now

We may earn a commission from links on this page. Deal pricing and availability subject to change after time of publication. The Nest Doorbell (Wired, 3rd Gen) is currently selling for $132, down from...

Pretrain a BERT Model from Scratch

This article is divided into three parts:

- Creating a BERT Model the Easy Way
- Creating a BERT Model from Scratch with PyTorch
- Pre-training the BERT Model

If your goal is to create a...

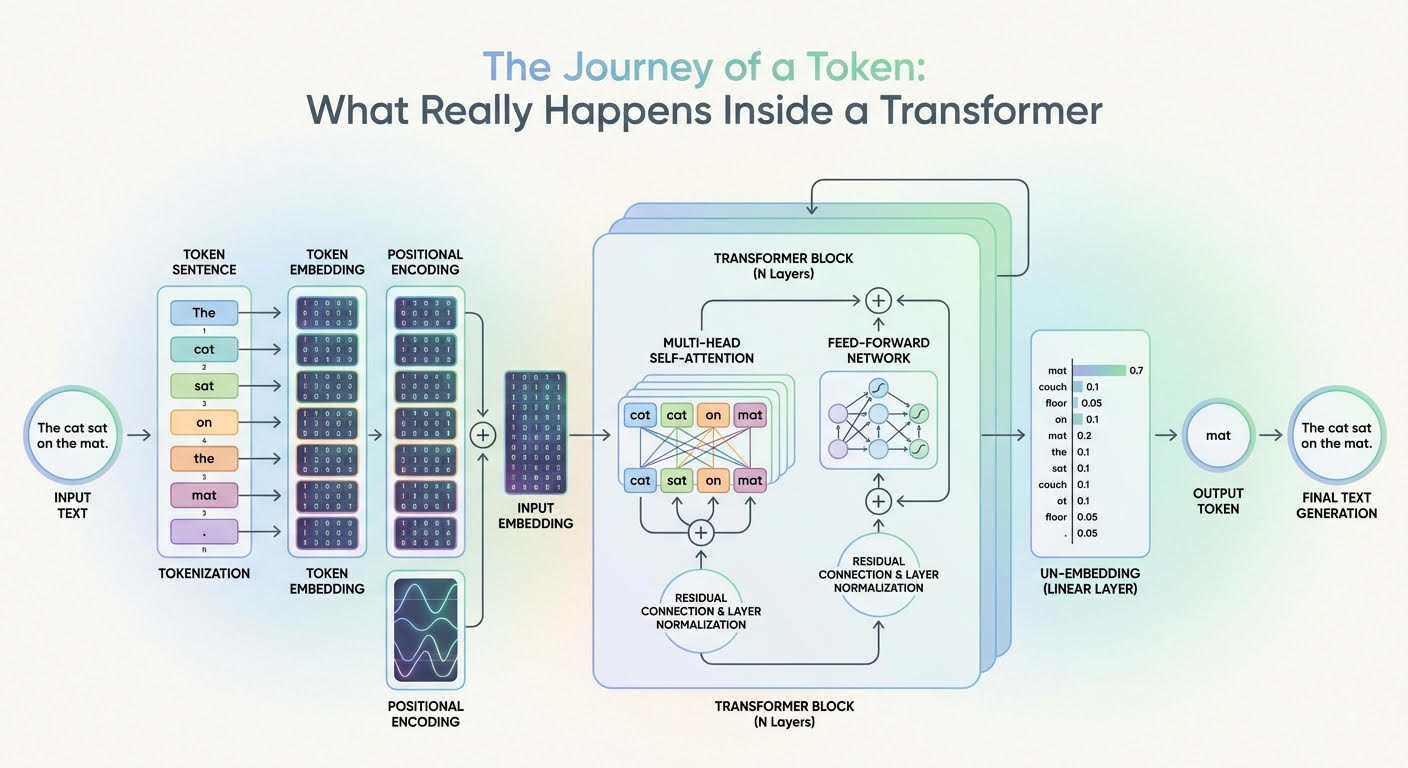

The Journey of a Token: What Really Happens Inside a Transformer

Large language models (LLMs) are based on the transformer architecture, a complex deep neural network whose input is a sequence of token embeddings.

By Adrian Tam
